AI First, Thinking Second? Why Shopify’s Memo Misses the Point

Shopify’s AI push treats tools as strategy. Real ops value comes from clarity, process, and leadership.

Is The Sky Falling?

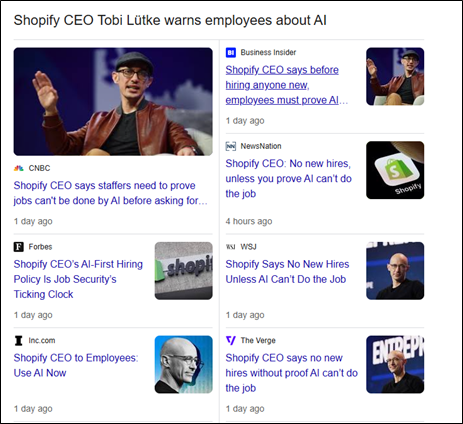

On March 26, I wrote: “Seeing a lot of Ops leaders hyping up the ‘AI-first workplace’ — that’s like a construction manager saying ‘Hammer-first building site.’ Great, you’ve got a new tool, but who’s going to read the blueprints?”

Fast forward a week, and Shopify’s CEO, Tobi Lütke, mandated an “AI-first” culture across the company. (Maybe I should play the lotto.) In his internal memo, leaked on April 7, he described a full-court press toward AI integration into the Shopify workforce: mandatory tools, performance review criteria, and a presumption that AI should handle most tasks before new headcount is even considered.

The major takeaway from the memo is that the CEO of a company with significant market share (among the top e-commerce platforms worldwide) is putting AI above new talent. Jarring as it may be, all he is doing is saying the quiet part out loud. This has been a fear of the workforce for the last two years; “AI is coming” has been the sound bite heard across newspapers, happy hours, and even among couples. To many, this memo marks the end. To me, it can be a study of nuance, context, and preparation.

I will admit the memo is bold. It’s provocative. It raises one massive question: “What happens when the tool becomes the strategy?”

When Tools Become Doctrine

AI is a force multiplier, not a force maker. In my last post, “AI Won’t Save You from Drowning in Work, but These 3 Concepts Will,” I laid out what AI can’t do — critical thinking, process clarity, and outcome orientation. These are the foundations of operations.

Let’s talk about what AI can do — and why that still doesn’t make it doctrine.

AI can draft content, automate repeatable tasks, surface patterns, and cut down time on routine work.

AI can’t define what matters, align a team, or build the scaffolding that holds an operation together.

AI can help you move faster, write drafts, and find connections in data.

AI can’t tell you what’s important, help your team agree on the next steps, or build the habits that keep things running.

AI can support decisions by summarizing options or filling in gaps.

AI can’t set priorities, resolve tradeoffs, or create the trust needed to move forward.

In full transparency, AI is helping me write this post. It’s fantastic at drafting ideas, sharpening sentences, and speeding things up. But it didn’t come up with the concept. It didn’t create the narrative. And it definitely doesn’t understand the nuance of this piece.

The gaps are glaring if you rely on AI alone and leave out the humans who have to use it. Shopify’s memo assumes that if everyone uses AI, productivity will naturally follow. Consider buying 100 hammers for a crew of 10 workers: what would you be accomplishing? You would be spending more money on tools than on an extra set of hands to help. That isn’t clever. It just amplifies confusion at scale.

You can’t shape entrepreneurship in an AI-driven world if no one agrees on what the work is or why it matters.

What the Evolution of the AI Workplace Should Actually Look Like

AI is here. I’m not a boomer clinging to the idea that it’ll never replace human output. Entry-level jobs are absolutely at risk. Repetitive overseas scut work? Already on borrowed time. But let’s get real about what we’re actually seeing in the workplace. A lot of this isn’t new. OCR (optical character recognition), ETL (extract, transform, load), and ML (machine learning) have been streamlining back-office work for over a decade. These aren’t glamorous breakthroughs. They’re technical, behind-the-scenes tools that quietly make things faster and cheaper. What large language models have done is put a shiny interface on top of all that and pushed it into the mainstream. It feels new, but it’s mostly an acceleration of what’s already been happening.

What made those earlier tools work was how they were adopted. Large enterprises didn’t chase features. They built around systems. The tech served the structure, not the other way around.

Start with systems, not features

It’s not about which tools you use. It’s about why and when you use them. Systems thinking means asking how work flows across a team, not just how to speed it up in one corner.

Build the blueprint before the stack

Clear process always beats clever tech. You cannot automate chaos. Without a shared understanding of how things get done, AI just creates more noise, faster.

Focus on sequence, not speed

Ops is not about doing things faster. It is about doing the right things, in the right order, at the right time. AI can support that, but it cannot replace the thinking required to make it work.

The future of work is not AI-first. It is system-first, with AI in the passenger seat, not the driver’s.

This approach requires the same three core principles I mentioned earlier. And yes, AI will accelerate them. It will also replace parts of human work. I know I sound like a broken record, but that doesn’t make it less true.

The Shopify memo includes a few encouraging signals. The company provides tools like chat.shopify.io, Copilot, Cursor, and Claude Code. It also expects employees to share both their AI successes and failures internally. That is a solid principle for building an AI-adopted workforce. Adopted, not driven.

But the road to hell is paved with good intentions. Principles can be corrupted. Facebook’s "Move Fast and Break Things" motto started as a call to innovate. It ended up as a case study in collateral damage. No amount of positive PR can erase that. Even the best intentions will break under pressure if the operational backbone is weak.

Incentivizing the Wrong Behavior at the Leadership Level

During moments of uncertainty and technological change, leadership principles matter more than ever. People look up, not just for strategy, but for signals on how to behave.

Mandating AI use, tying it to performance reviews, and baking it into headcount decisions might seem bold, but it sends the wrong message. It tells teams that tool usage matters more than judgment. That visibility matters more than outcomes. That following directions is safer than asking hard questions.

Strong leadership in times like this means holding the line on what makes work effective: clarity, alignment, and trust. It means creating space for experimentation without forcing adoption. It means asking whether a tool makes the work better, not just faster.

When leaders chase adoption metrics instead of operational value, the culture tilts. People stop thinking. They start complying. And that is when good ideas turn into bad systems.

What the Right Kind of Adoption Looks Like

If leaders want AI to actually make work better, not just louder, the approach has to shift from mandates to stewardship. Good adoption is thoughtful, transparent, and rooted in real use cases. Here's what that looks like:

Stay in the loop

Listen for new tools. Take the demos. Ask questions. And what I tell every operator — try to break the tool. That’s how you find out if it’s actually useful. The AI landscape is full of snake oil salesmen. Most tools are solving niche problems dressed up as universal solutions. Be curious, but be skeptical.

Look for real use cases

Don’t start with the tool. Start with the problem. See where people are already solving something with AI. Look at what they’re trying to avoid, what they’re improving, and what still feels clunky. Use that to guide adoption. You don’t scale hype — you scale what works.

Map where AI can help

Break down workflows and verticals. Look at the boring stuff first — tagging, summarizing, drafting, flagging. That’s where most of the lift is. You don’t need a company-wide rollout. You need a clear view of where AI plugs in without breaking things.

Build a roadmap, not a mandate

Adoption needs a plan. Set expectations for exploration. Track what’s being tried, what’s showing results, and what needs another pass. Treat AI like a product feature — test it, learn from it, improve it. Do not treat it like a policy to enforce.

Be transparent

Say what you’re learning. Say what you’re uncertain about. Let teams in on the process so they know this isn’t theater. Adoption only works if people trust that the goal is better work — not just new tools.

The future of AI in the workplace will be built by leaders who make room for curiosity, clarity, and feedback — not fear of falling behind.

In the Rush to be More Artificial, Don’t Forget to be More Intelligent

Shopify’s memo might be the new standard. Or it might just be loud. Either way, it’s not a strategy. AI is not the problem. Misusing it is. Misleading people about what it can do is worse. The leaders who will thrive in this moment are not the ones mandating tools. They’re the ones building systems that make tools useful. They’re the ones creating trust, not pressure.

So no, the sky is not falling. But if you confuse noise for progress, it just might cave in.
